Jul 07, 2020

Jon Loyens

Chief Data & Strategy Officer & Co-Founder

Long before starting data.world, I was enamored with creating data-driven cultures. We founded data.world to help the world adopt and improve data-driven decision-making and data literacy. I’ve seen firsthand how this substantially increases both corporate performance and democratization. And I’ve seen countless initiatives stall out or fail to launch, struggling with workflow between data producers and consumers, reuse and reproducibility, data literacy, security, and privacy and correctness concerns.

We need a new way of thinking about and running data analytics programs, starting with a modernized approach to data governance. This is the first in a series of blogs on what we call Agile Data Governance.

In Part One below we'll cover lessons learned from software history. Next we’ll publish Part Two, which profiles stakeholder types and explains the process at a high level. In Part Three we’ll explore how Agile Data Governance removes five key barriers to data-driven culture. And in Part Four, we’ll reveal the Principles of Agile Data Governance.

I sincerely hope you follow the entire series. But if you simply want the TL;DR, here it is:

Enterprises waste millions of dollars on failed data initiatives because they apply outdated thinking to new data problems. This results in overly-complex, rigid processes that benefit the few and make the rest of us less productive.

Agile Data Governance adapts the best practices of Agile and Open software development to data and analytics. It iteratively captures knowledge as data producers and consumers work together so that everyone can benefit.

We believe this methodology is the fastest route to true, repeatable return on data investment.

People have touted the advantages of being data-driven, the “big data revolution,” and ML/AI for years. Very few organizations make it to that promised land. Most end up stuck on a treadmill of building out new infrastructure or deploying the shiniest new self-serve BI or data science tool. But few big data initiatives earn adoption. This is because creating a data-driven culture is not a technology problem. It’s a people problem. Many before me have made this important point.

The data and analytics industry today reminds me a lot of the time before the dot-com crash. Back then every company thought they had to transform into a tech company. Today, it’s rare to meet a company that doesn’t, at some level, consider themselves a big data company.

Twenty-five years ago “tech-native” companies were eating the world, and every other company raced to get online. They hired teams of engineers, contractors, and consultants. Vendors rushed in to “support” them with all types of expensive technology they hyped as silver bullets. But most software projects went nowhere. According to a 1995 report from The Standish Group, 31% of projects were canceled before completion, while only 16% of projects were “completed on-time and on-budget.” These failures, wasting valuable time and money, were just as responsible for the dot-com crash as the hubris and mismanagement of the dot-coms themselves.

From the ashes emerged a new way of thinking about software engineering and project delivery. Two key movements changed everything: Agile and Open Source.

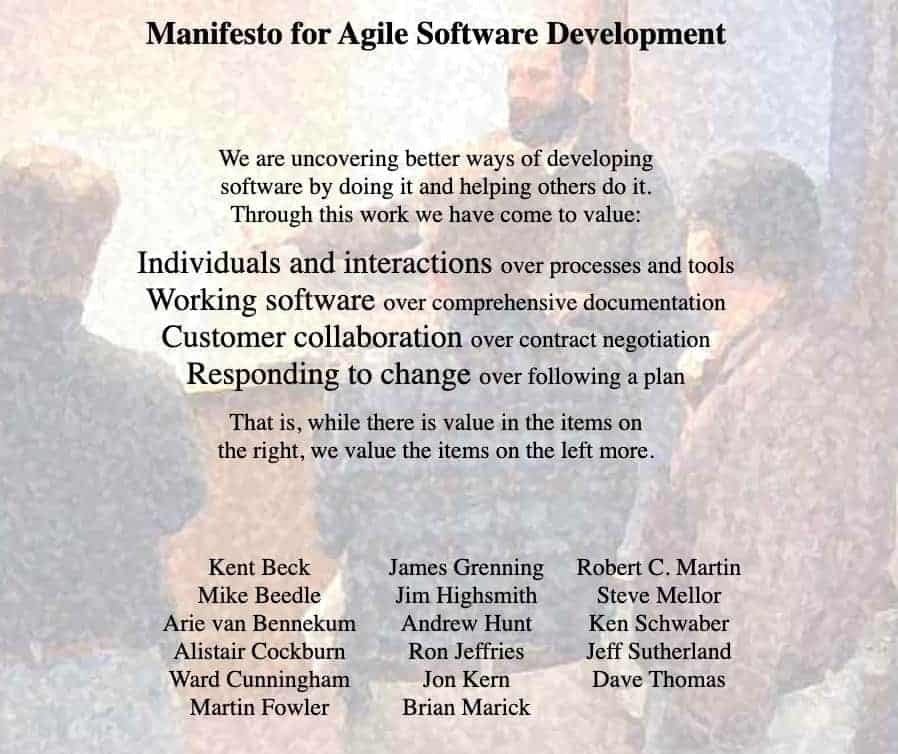

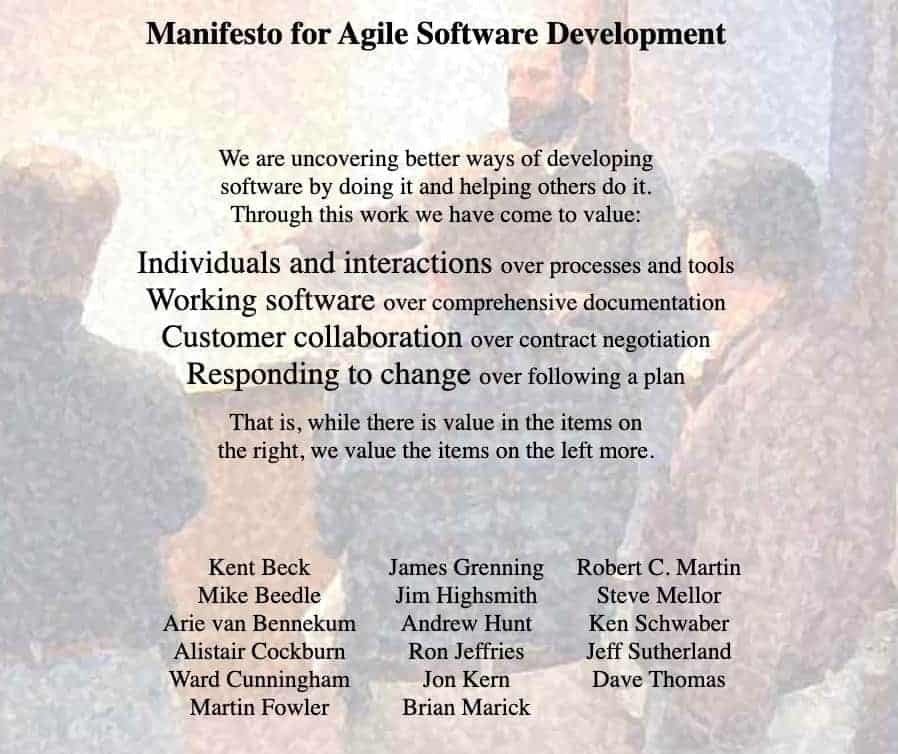

Agile took direct aim at the overruns that plagued the “waterfall”, top-down style development that was the era’s norm. The Agile Manifesto is simple:

The manifesto and its companion principles spawned many official and formalized "processes.” But whether you do Scrum, Kanban, or something else, the basics endure. Put people and iterative, inclusive delivery ahead of elusive concepts like “completeness.” Harness the expertise of users and stakeholders in near real-time. Apply that collective knowledge with each iteration to deliver a better product that people actually use.

Open source also played a major role in changing software delivery. It gave everyone access to incredible technology such as Linux and Postgres. But more subtly, and more significantly, open source changed how teams build software.

People were skeptical: was it as reliable and secure? Turns out, open source was and is more reliable and secure because of the rigor and inclusivity of its community-driven review processes, compared to the traditional top-down, closed models. Additionally, by operating in the open, software developers have become more skilled and literate in the craft. Open source projects spawned peer-review, test-driven development, continuous integration and deployment—techniques that every software engineering team worth their salt use today.

If you're trying to hire high-caliber software engineers today and you don't use agile processes or open-source best practices...good luck with that!

Data and analytics leaders must think about this as they try to build data-driven cultures. While waterfalls are a thing of the past in most software development operations, the top-down approach is still alive and well in data and analytics.

The hard truth is that it’s impossible to build data-driven cultures under waterfalls.

You won’t gain adoption within your organization if you don’t bring your community along for the ride. The best data-driven companies like Netflix, Lyft, and Airbnb started with culture and process and then, true to Agile and Open, built or adopted tools that support inclusive contribution and data literacy.

But many vendors are still thoroughly tops-down and closed. They’re designed for a decades-old waterfall culture, but sold as modern solutions. These companies will never use words like “waterfall,” and they’ll spend millions on marketing to convince you they, too, are built for today. But when the sales cycle ends, the agile and open paint jobs start peeling. And you’ll soon realize the expensive thing you’ve bought isn’t a solution at all, but yet another ineffective, technology-before-people stab at fixing your business problems. All that, and you’ll be no closer to the data-driven culture your company needed yesterday.

Enter Agile Data Governance.

Agile Data Governance is the process of creating and improving data assets by iteratively capturing knowledge as data producers and consumers work together so that everyone can benefit. It adapts the deeply proven best practices of Agile and Open software development to data and analytics.

Click here for Part 2, where we profile stakeholder types and explain the process at a high level.

Long before starting data.world, I was enamored with creating data-driven cultures. We founded data.world to help the world adopt and improve data-driven decision-making and data literacy. I’ve seen firsthand how this substantially increases both corporate performance and democratization. And I’ve seen countless initiatives stall out or fail to launch, struggling with workflow between data producers and consumers, reuse and reproducibility, data literacy, security, and privacy and correctness concerns.

We need a new way of thinking about and running data analytics programs, starting with a modernized approach to data governance. This is the first in a series of blogs on what we call Agile Data Governance.

In Part One below we'll cover lessons learned from software history. Next we’ll publish Part Two, which profiles stakeholder types and explains the process at a high level. In Part Three we’ll explore how Agile Data Governance removes five key barriers to data-driven culture. And in Part Four, we’ll reveal the Principles of Agile Data Governance.

I sincerely hope you follow the entire series. But if you simply want the TL;DR, here it is:

Enterprises waste millions of dollars on failed data initiatives because they apply outdated thinking to new data problems. This results in overly-complex, rigid processes that benefit the few and make the rest of us less productive.

Agile Data Governance adapts the best practices of Agile and Open software development to data and analytics. It iteratively captures knowledge as data producers and consumers work together so that everyone can benefit.

We believe this methodology is the fastest route to true, repeatable return on data investment.

People have touted the advantages of being data-driven, the “big data revolution,” and ML/AI for years. Very few organizations make it to that promised land. Most end up stuck on a treadmill of building out new infrastructure or deploying the shiniest new self-serve BI or data science tool. But few big data initiatives earn adoption. This is because creating a data-driven culture is not a technology problem. It’s a people problem. Many before me have made this important point.

The data and analytics industry today reminds me a lot of the time before the dot-com crash. Back then every company thought they had to transform into a tech company. Today, it’s rare to meet a company that doesn’t, at some level, consider themselves a big data company.

Twenty-five years ago “tech-native” companies were eating the world, and every other company raced to get online. They hired teams of engineers, contractors, and consultants. Vendors rushed in to “support” them with all types of expensive technology they hyped as silver bullets. But most software projects went nowhere. According to a 1995 report from The Standish Group, 31% of projects were canceled before completion, while only 16% of projects were “completed on-time and on-budget.” These failures, wasting valuable time and money, were just as responsible for the dot-com crash as the hubris and mismanagement of the dot-coms themselves.

From the ashes emerged a new way of thinking about software engineering and project delivery. Two key movements changed everything: Agile and Open Source.

Agile took direct aim at the overruns that plagued the “waterfall”, top-down style development that was the era’s norm. The Agile Manifesto is simple:

The manifesto and its companion principles spawned many official and formalized "processes.” But whether you do Scrum, Kanban, or something else, the basics endure. Put people and iterative, inclusive delivery ahead of elusive concepts like “completeness.” Harness the expertise of users and stakeholders in near real-time. Apply that collective knowledge with each iteration to deliver a better product that people actually use.

Open source also played a major role in changing software delivery. It gave everyone access to incredible technology such as Linux and Postgres. But more subtly, and more significantly, open source changed how teams build software.

People were skeptical: was it as reliable and secure? Turns out, open source was and is more reliable and secure because of the rigor and inclusivity of its community-driven review processes, compared to the traditional top-down, closed models. Additionally, by operating in the open, software developers have become more skilled and literate in the craft. Open source projects spawned peer-review, test-driven development, continuous integration and deployment—techniques that every software engineering team worth their salt use today.

If you're trying to hire high-caliber software engineers today and you don't use agile processes or open-source best practices...good luck with that!

Data and analytics leaders must think about this as they try to build data-driven cultures. While waterfalls are a thing of the past in most software development operations, the top-down approach is still alive and well in data and analytics.

The hard truth is that it’s impossible to build data-driven cultures under waterfalls.

You won’t gain adoption within your organization if you don’t bring your community along for the ride. The best data-driven companies like Netflix, Lyft, and Airbnb started with culture and process and then, true to Agile and Open, built or adopted tools that support inclusive contribution and data literacy.

But many vendors are still thoroughly tops-down and closed. They’re designed for a decades-old waterfall culture, but sold as modern solutions. These companies will never use words like “waterfall,” and they’ll spend millions on marketing to convince you they, too, are built for today. But when the sales cycle ends, the agile and open paint jobs start peeling. And you’ll soon realize the expensive thing you’ve bought isn’t a solution at all, but yet another ineffective, technology-before-people stab at fixing your business problems. All that, and you’ll be no closer to the data-driven culture your company needed yesterday.

Enter Agile Data Governance.

Agile Data Governance is the process of creating and improving data assets by iteratively capturing knowledge as data producers and consumers work together so that everyone can benefit. It adapts the deeply proven best practices of Agile and Open software development to data and analytics.

Click here for Part 2, where we profile stakeholder types and explain the process at a high level.